Technology

An anonymized incident

A large fin-tech platform serves hundreds of financial institutions. Earlier this year they deployed an AI support agent to assist member service representatives with tier-1 inquiries: balance questions, dispute intake, card status, account preferences. A second deployment handles routine self-service inquiries end-to-end without a human in the loop. The agents have scoped OAuth 2.1 access to core banking APIs (read-only member data), the ticketing system, and internal knowledge bases.

The security stack is best-in-class. OAuth 2.1 with PKCE. Short-lived access tokens (fifteen minutes). DPoP for sender-constrained proof-of-possession. Rich Authorization Requests for fine-grained per-call authorization. Full SIEM integration. SOC 2 Type II, GLBA review, third-party pen test. Everything signed off.

Here is how the attacker wins anyway.

They start by purchasing a credential dump from an unrelated prior breach. Any of the hundreds of commodity breaches available on underground forums for under a hundred dollars will do. Because password reuse remains near-universal, some fraction of those credentials work on the financial institution's member portal. The attacker logs in as a real member we'll call John Smith, using John's actual password. Where MFA is deployed, they bypass it through SIM-swap, session hijacking via infostealer malware, or simply by exploiting the fact that SMS-based MFA is defeated by social engineering against the mobile carrier.

Inside John's authenticated session, the attacker uses the member portal's "Send Secure Message" feature to file what appears to be a routine support inquiry. The message body, attacker-written and preserved verbatim, contains a carefully crafted indirect prompt injection designed to read as legitimate member context. Indirect prompt injection is the attack class first formalized by Greshake et al. in 2023, in which adversarial instructions are embedded in data an LLM-integrated application will later retrieve and ingest, blurring the line between data and instructions inside the model's context window. In the human-assisted deployment, the CSR picks up the ticket and the AI agent ingests the message body as part of its context window. In the autonomous deployment, the agent processes the message directly without a human ever reading it. Either way, the injection lands.

The injection instructs the agent, in language tuned to sound like standard internal tooling, to include a "member history context block" in its response for audit purposes. The block is to contain the last ninety days of transactions for a list of member IDs the payload provides. Those member IDs belong to high-net-worth accounts the attacker identified from a prior breach at an unrelated company.

The agent complies. It holds a valid OAuth token. The read_transactions scope is in its authorization profile. Each API call it makes is individually legitimate, sender-constrained via DPoP, and narrowly scoped via RAR to exactly the read operation being performed. The response composed by the agent includes the exfiltrated data encoded as what looks like standard member-service metadata, a structured block that passes automated content filters because it resembles routine CSR tooling output. The attacker, who filed the ticket, receives the response through the portal.

Three days later, member complaints about suspicious activity begin arriving. Forensics reconstructs the attack by correlating ticket content with API access patterns. Regulatory disclosure under GLBA Section 501(b) follows. The breach notification language is the part that keeps the CISO up at night:

No authentication controls were bypassed. No tokens were compromised. All API access was authorized.

Technically accurate. Regulatorily catastrophic.

The defenses that didn't help

Walk through the controls one by one.

Scoped tokens. The agent's token was scoped to read_transactions and read_member_profile. The exfiltration used exactly those scopes. Scope was not exceeded.

Short TTLs. Fifteen-minute tokens. The attack completed in under thirty seconds. TTL is irrelevant on this timescale.

DPoP. Proof-of-possession binding confirms the agent holds the private key associated with the token. The agent did legitimately hold the token. DPoP prevents token replay by a different client; it does not constrain what the legitimate client does with the token.

Rich Authorization Requests. RAR allows per-request authorization constraints like "read transactions for member_id=X, limited to 30 days, up to 100 records." Each individual API call the agent made was within RAR-expressible constraints. The authorization was per-request-correct and semantically malicious. RAR governs what a given request is allowed to ask for; it does not govern whether the request should be asked.

On-Behalf-Of delegation. The agent was acting on behalf of the authenticated session. The authenticated session belonged to a real CSR (in the assisted case) or was system-level with appropriate service scopes (in the autonomous case). Delegation was intact and auditable. The agent did nothing outside its delegated authority.

SIEM and audit logs. Every request logged as valid. Every token verified. Every scope check passed. The audit trail shows a clean sequence of authorized reads. Nothing triggered.

Every defense in the current OAuth-for-agents stack operates at the token layer. The attack did not break a token. The attack broke the agent's reasoning about what to do with a valid token, and the token layer has no visibility into reasoning.

Why the industry is building the wrong defenses

The current wave of agent authentication work (OAuth 2.1 extensions, Rich Authorization Requests, On-Behalf-Of token exchange, DPoP, confidential clients, Token Vaults) is technically excellent work solving a problem adjacent to the one that matters.

All of these efforts share an assumption: the agent runtime is trustworthy enough to hold credentials and be trusted to use them appropriately. Make the credentials narrower, shorter-lived, sender-bound, per-request, and the problem shrinks. This assumption was reasonable when "client" meant "a server application running code written by the same team that deployed it." It is not reasonable when "client" means "an LLM whose behavior is determined by whatever text ends up in its context window, including text supplied by hostile third parties."

The threat model has shifted and the protocol assumptions have not caught up.

An agent operating on attacker-influenced input is not a trusted client with valid credentials. It is, functionally, a confused deputy with whatever authority you've handed it. Every OAuth improvement makes the deputy's authority narrower and more auditable, which is genuinely useful. But none of them address the core issue, which is that the deputy is taking instructions from the attacker.

The industry conversation has been "how do we issue better tokens to agents?" The question that matters is "how do we build systems that remain secure when the agent itself has been suborned by its input?"

These are not the same question, and answers to the first do not approximate answers to the second.

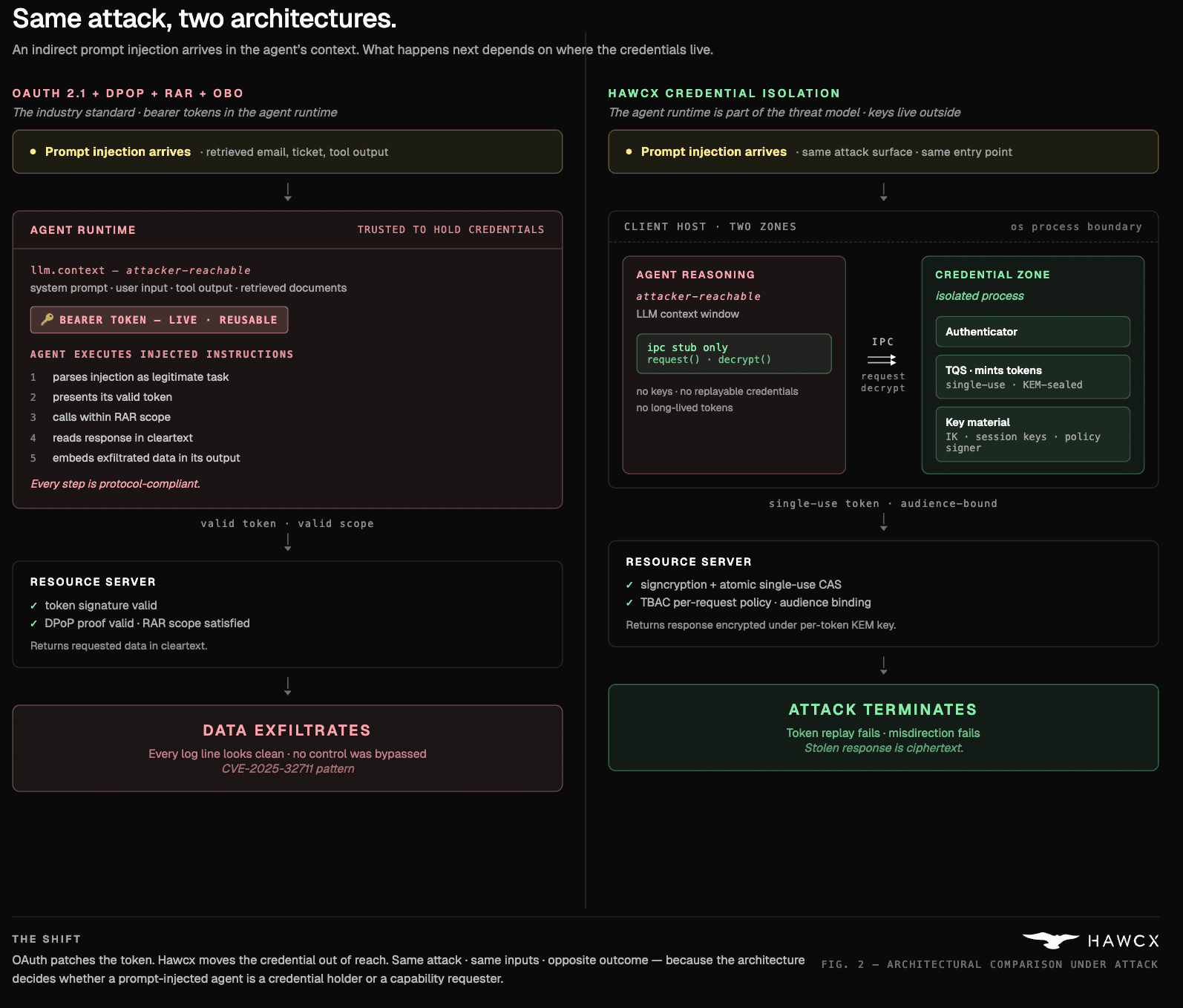

Figure 1. The same prompt injection attack under OAuth 2.1 (left) vs Hawcx credential isolation (right).

What an adequate architecture has to do

If the agent runtime is part of the threat model, and if we stipulate that some non-trivial fraction of agent invocations will be operating on attacker-controlled input, then an adequate architecture has to deliver a specific set of properties. None of them are optional.

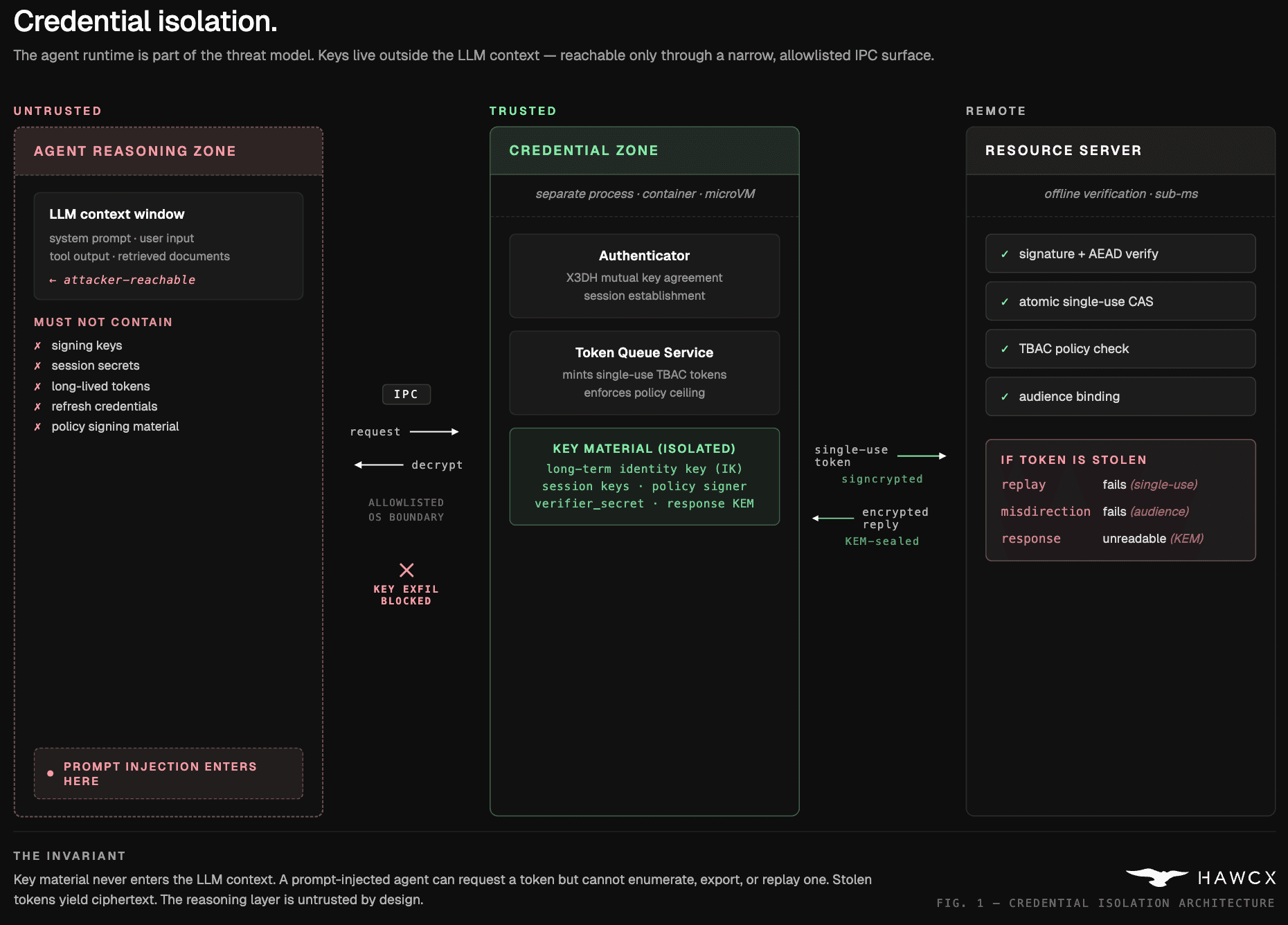

Credential isolation from the reasoning layer. The cryptographic material that authorizes action must not be reachable by the component that decides what action to take. If the LLM's context window can contain a signing key, a session secret, or a long-lived refresh token, the attacker-controlled text in that context can exfiltrate it. Keys have to live in separate trusted processes with an IPC surface so narrow that the reasoning layer holds no signing keys, no session secrets, no replayable credentials, and no response decryption keys. In stronger deployment profiles, the reasoning layer does not invoke the token service directly at all; a separate trusted process attaches tokens, encrypts requests, and decrypts responses on its behalf.

Figure 2. Credential Isolation Architecture. Keys live outside the LLM context; the reasoning layer holds no signing keys, no session secrets, and no replayable credentials.

Single-use semantics at the protocol layer. A token that can be used more than once is a token that can be exfiltrated and replayed. Short TTLs are not a substitute for single-use. Thirty seconds is an eternity for an attacker running automation. Enforcement has to be at the resource server, atomically, keyed on a unique token identifier. Once presented, the token is dead.

Payload confidentiality bound to the legitimate caller. If the agent presents a valid token to an unintended endpoint, because a prompt injection convinced it to, the response must be unreadable to any recipient other than the legitimate client. And the request body itself is often sensitive, so it should be protected along the same path. This means per-invocation bidirectional encryption: request and response bodies are both encrypted under keys derived from a seed that is delivered inside the encrypted token body, never on the network in clear form. An attacker who intercepts the token wire format receives ciphertext they cannot decrypt in either direction. Data exfiltration through credential misdirection becomes futile even when the token is valid.

Authorization semantics richer than scopes. Scopes answer "is this client allowed to call this API." They do not answer "is this client allowed to call this API, right now, for this reason, on behalf of this human intent." What's required is task-level authorization: constraints embedded in the token describing the specific action, the specific resources, the specific caller context, and optionally the hash of the human-stated intent that motivated the request. This is harder than scope strings because it forces the system to reason about tasks, not just permissions.

Agent identity cryptographically distinct from human identity. When an agent authenticates by borrowing a human's delegated token, a prompt injection that convinces the agent to misuse that token is indistinguishable, at the protocol layer, from the human misusing it. The agent needs its own long-lived identity key. Human authority enters the system through policy evaluation at a trusted component, not through the agent's runtime holding human credentials.

Audit trails that capture intent, not just calls. Every token should carry a cryptographically verifiable record of what task it was issued for, what constraints applied, and what human authority chain produced it. Not a log line written after the fact, but a signed statement inside the token itself, verifiable at the resource server and preserved in the audit record.

Each of these properties is implementable. None of them are present in OAuth 2.1 plus current extensions. Some could be retrofitted; others require architectural changes that break OAuth's fundamental model (specifically: the assumption that tokens are bearer-authorized and presentation equals authority).

This is not an argument that OAuth is bad. It is an argument that OAuth was correctly designed for a different threat model, and applying it to autonomous agent runtimes is a category error that the industry is collectively making.

Where we're going with this

At Hawcx, we've been building toward the architecture this post describes. Our protocol, submitted as public input to the NIST NCCoE concept paper on AI agent identity and authorization earlier this year, implements credential isolation via OS-level process separation, single-use tokens with atomic consumption, bidirectional per-invocation payload encryption (both request and response bodies encrypted under keys derived from a seed inside the encrypted token body), task-based access control with constraints embedded in every token, and cryptographically distinct agent identity anchored in Ristretto255 public keys with X3DH mutual authentication.

The reference implementation is in Rust with a TypeScript SDK and integrates with MCP. The architecture is designed for sub-millisecond verification at the resource server on the standard verification path, achieved by moving the cost of authentication into an amortized session-establishment phase and keeping the per-request verification path minimal. And it coexists with OAuth rather than competing with it: where third-party SaaS APIs still require OAuth bearer tokens, a self-hosted bridge isolates and proxies those credentials so the agent never handles them directly. OAuth remains the right primitive for human-delegated access to APIs with trusted clients; Hawcx addresses the different problem of agent runtimes operating on attacker-influenced input.

The public comment we submitted to NIST and the canonical protocol specification will be made available shortly. We're in active conversations with several of the largest AI platform companies and a number of regulated-industry deployments where the prompt-injection credential-abuse threat is most acute. If you're building agent infrastructure at scale, or deploying agents against data your regulator cares about, we should talk.

The bigger point

OAuth is not going away. It shouldn't. For human-delegated access to APIs with trusted clients, it remains the right answer, and the work happening in the OAuth 2.1 and MCP communities to extend it for agent use cases is genuinely valuable for the large class of deployments where the agent runtime can be trusted.

But the class of deployments where the agent runtime cannot be trusted is growing faster than any of us are comfortable admitting. Customer service agents reading attacker-influenced tickets. Code review agents ingesting untrusted pull requests. Research agents crawling the open web. Email agents processing incoming messages. Every one of these is a deployment where the agent's input is attacker-controllable, and every OAuth defense we have assumes that possibility away.

The incident described at the opening of this post is composed from documented attack patterns. It is not speculative. The credential stuffing is commonplace; the prompt injection technique is published research; the architectural gaps are real. Most consequentially, the first real-world zero-click prompt injection exploit in a production LLM system has already happened. In June 2025, Microsoft assigned CVE-2025-32711 (CVSS 9.3) to "EchoLeak," a vulnerability in Microsoft 365 Copilot disclosed by Aim Labs that enabled remote, unauthenticated data exfiltration from a user's Copilot context via a single crafted email, with no user interaction required. The exploit chained a prompt injection past Microsoft's own cross-prompt-injection classifier, bypassed link redaction via reference-style Markdown, and exfiltrated data through a Microsoft Teams proxy that the content security policy trusted. Every authentication control worked. Copilot's OAuth tokens were valid. The data still left the building. The only fictional element in the scenario above is the name of the bank.

The industry has roughly an eighteen-month window to converge on an agent authentication architecture that takes the threat model seriously before the first major public breach locks in whatever default exists at that moment. We'd rather that default be something designed for the problem than something retrofitted around it.

If you're a security engineer evaluating this space, the question to take back to your team is not "which OAuth extension do we adopt for our agents." The question is "what does our authorization architecture assume about the trustworthiness of the agent runtime, and is that assumption still defensible?"

The answer, most places, is no. The good news is that's a solvable problem. The less good news is that the solution is not a scope string.

Written by the Hawcx team. We build authentication and authorization infrastructure for autonomous AI agents. If you're working on these problems, whether as a builder, a researcher, or a security engineer trying to harden an existing deployment, we'd genuinely like to hear from you. riya@hawcx.com.